A Japanese AI voice can turn a simple script into a polished voiceover in minutes. Whether you are dubbing a YouTube video, narrating an e-learning course, or building a character for a game, the technology behind synthetic Japanese speech has reached a point where listeners often cannot tell the difference between AI and a human speaker.

This guide walks you through every step of creating natural-sounding Japanese AI voiceovers, from understanding the underlying technology to fine-tuning pitch, accent, and emotion.

The wider voice synthesis market is growing fast. According to a report from Grand View Research, the global text-to-speech market was valued at USD 4.4 billion in 2023 and is expected to grow at a compound annual growth rate of 14.6% through 2030.

Japanese-language TTS is a significant slice of that growth, driven by demand from anime studios, gaming companies, virtual YouTubers, and corporate training departments across Asia.

This post covers everything you need to know. Let’s get into it.

Why Japanese AI voiceovers are in demand

The use cases for synthetic Japanese speech have multiplied over the past three years. Here are the main drivers.

- Content localization. Global brands need Japanese audio for ads, apps, and product demos. Hiring voice actors for every update is slow and expensive.

- Anime and gaming. Independent creators want authentic Japanese voices for characters without booking studio time. Fans around the world expect Japanese audio as an option.

- E-learning. Japanese companies and language schools use AI narration to produce training modules at scale.

- Accessibility. Screen readers and navigation systems in Japan rely on TTS engines that sound clear and natural.

- Virtual streamers. VTubers and live streamers use real-time voice synthesis to maintain a consistent character voice during broadcasts.

The common thread is speed. A professional voice actor session in Tokyo can take days to schedule and hours to record. An AI voice generates the same output in seconds.

How Japanese AI voice technology works

At a high level, modern Japanese voice generators convert text into speech using deep learning models trained on large datasets of recorded human speech. The process has three main stages.

Text analysis

The system reads the input text and figures out how to pronounce each word. Japanese is written in a mix of kanji, hiragana, katakana, and sometimes romaji. The engine must parse all four scripts correctly.
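As a rough illustration of the first part of that job, the sketch below labels each character by Unicode block and groups neighbours that share a script. The function names are made up for this example, and the ranges cover only the most common blocks. A production front end does far more than this.

```python
def script_of(ch: str) -> str:
    """Classify one character by Unicode block (a coarse heuristic)."""
    code = ord(ch)
    if 0x3041 <= code <= 0x309F:
        return "hiragana"
    if 0x30A0 <= code <= 0x30FF:
        return "katakana"
    if 0x4E00 <= code <= 0x9FFF:  # common CJK ideographs only
        return "kanji"
    if ch.isascii() and ch.isalpha():
        return "romaji"
    return "other"


def script_runs(text: str) -> list[tuple[str, str]]:
    """Group consecutive characters that share a script."""
    runs: list[tuple[str, str]] = []
    for ch in text:
        kind = script_of(ch)
        if runs and runs[-1][0] == kind:
            runs[-1] = (kind, runs[-1][1] + ch)
        else:
            runs.append((kind, ch))
    return runs


for kind, chunk in script_runs("私はAIでコーヒーを注文した"):
    print(kind, chunk)
```

A quick pass like this is also handy for spotting stray romaji in a script before you send it to a TTS tool.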

It also handles word boundaries. Japanese does not use spaces between words, so the model needs a morphological analyzer to segment sentences. Tools like MeCab or Sudachi break text into tokens before the acoustic model takes over.
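To see why segmentation needs a dictionary at all, here is a deliberately naive sketch: greedy longest-match against a tiny hand-made word list. The lexicon and function name are invented for illustration. Real analyzers such as MeCab or Sudachi rely on large dictionaries and scoring rather than greedy matching, so treat this only as a picture of the problem.

```python
def segment(text: str, lexicon: set[str]) -> list[str]:
    """Greedy longest-match segmentation against a word list."""
    max_len = max((len(word) for word in lexicon), default=1)
    tokens: list[str] = []
    i = 0
    while i < len(text):
        # Try the longest candidate first; fall back to a single character.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in lexicon:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical mini-lexicon, just enough for one sentence.
LEXICON = {"今日", "は", "いい", "天気", "です", "ね"}
print(segment("今日はいい天気ですね", LEXICON))
# ['今日', 'は', 'いい', '天気', 'です', 'ね']
```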

Acoustic modeling

This is where the voice gets its character. The acoustic model predicts mel-spectrograms or other audio representations from the processed text. Modern systems use transformer-based architectures or diffusion models.

Google’s research on SoundStorm showed that parallel decoding of audio tokens can produce high-quality speech faster than autoregressive methods. Advances like this tend to reach commercial TTS products within a year or two.

Vocoder

The vocoder converts the predicted spectrogram into an actual audio waveform. Neural vocoders like HiFi-GAN produce crisp audio with few audible artifacts. The result is a WAV or MP3 file you can drop into your video editor.

Each stage has improved dramatically since 2022. The gap between robotic-sounding TTS and natural human speech has narrowed to the point where casual listeners often cannot distinguish the two.

What makes Japanese speech unique

Creating a natural Japanese AI voice is harder than doing the same in English. The language has features that trip up generic TTS engines.

Pitch accent

Japanese is a pitch-accent language, not a stress-accent language like English. The meaning of a word can change based on whether the pitch rises or falls. For example, in standard (Tokyo) Japanese, “hashi” with a high-low pattern means “chopsticks,” while a low-high pattern means “bridge.”

A good Japanese voice generator handles pitch accent at the word level and adjusts it based on sentence context. If you want to learn more about how TTS systems manage these patterns, read our guide on TTS Japanese.
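Pitch accent in standard Japanese is often described with a single number per word: the mora after which the pitch drops, with 0 meaning no drop. The sketch below turns that number into a high/low pattern, including one trailing particle. It is a simplified textbook model with a made-up function name, not how any particular engine represents accent.

```python
def pitch_pattern(mora_count: int, accent: int) -> str:
    """Return H/L per mora, then '-' and the level of a trailing particle.

    accent is the mora after which pitch drops; 0 means no drop.
    """
    if not 0 <= accent <= mora_count:
        raise ValueError("accent must be between 0 and mora_count")
    levels = []
    for position in range(1, mora_count + 2):  # +1 for the particle
        if accent == 1:
            high = position == 1          # high start, then low
        elif accent == 0:
            high = position > 1           # low start, stays high
        else:
            high = 1 < position <= accent  # rises, drops after the accent
        levels.append("H" if high else "L")
    return "".join(levels[:-1]) + "-" + levels[-1]


print(pitch_pattern(2, 1))  # HL-L  hashi, "chopsticks"
print(pitch_pattern(2, 2))  # LH-L  hashi, "bridge"
print(pitch_pattern(2, 0))  # LH-H  hashi, "edge"
```

Note that “bridge” and “edge” only differ once a particle follows, which is one reason sentence context matters.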

Particles and intonation

Sentence-ending particles like “ne,” “yo,” “ka,” and “wa” carry emotional weight. A question particle should trigger a rising intonation. A casual “ne” at the end of a statement adds warmth. The AI model needs training data that captures these nuances.

Politeness levels

Japanese has formal (keigo), polite (desu/masu), and casual speech registers. Each register changes not just vocabulary but also rhythm, speed, and tone. A corporate training video requires polite speech. An anime character might use rough, informal Japanese.

The best engines let you select a voice that matches the register you need, or they adjust automatically based on the input text’s formality.

Long vowels and geminate consonants

Stretching a vowel or doubling a consonant changes meaning. “Obasan” (aunt) versus “obaasan” (grandmother). “Kite” (come) versus “kitte” (stamp). The TTS system must get the duration right, or the output sounds wrong to native speakers.
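Duration in Japanese is usually counted in moras, and the word pairs above differ by exactly one. A minimal mora counter for kana input might look like this. It assumes the text is already in hiragana or katakana, and the names are illustrative.

```python
# Small kana that merge with the preceding kana into one mora (e.g. きょ).
SMALL_KANA = set("ゃゅょぁぃぅぇぉゎャュョァィゥェォヮ")


def count_moras(kana: str) -> int:
    """Count moras in a kana string.

    The small tsu (っ), the long-vowel mark (ー), and ん each count as
    a full mora, so they are not in SMALL_KANA.
    """
    return sum(1 for ch in kana if ch not in SMALL_KANA)


for word in ["おばさん", "おばあさん", "きて", "きって"]:
    print(word, count_moras(word))
# おばさん 4
# おばあさん 5
# きて 2
# きって 3
```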

Step-by-step guide to creating Japanese AI voiceovers

Here is a practical workflow. Follow these steps to go from a blank page to a finished audio file.

Step 1: Write or prepare your script

Start with clean, well-punctuated Japanese text. If you are writing in English first and translating, use a professional translator or a reliable machine translation tool, then have a native speaker review it.

Tips for script preparation:

- Write in the correct script. Use kanji where appropriate. Do not romanize everything.

- Add furigana or reading hints for uncommon kanji if your TTS tool supports it.

- Mark pauses with punctuation. A comma (、) creates a short pause. A period (。) creates a longer one.

- Keep sentences short. Long, compound sentences sound unnatural when read aloud by any TTS engine.
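Some of these checks are easy to automate before you generate anything. The sketch below flags long sentences and leftover Latin-letter words. The 40-character threshold is an arbitrary assumption, so tune it by ear for your own voice and content.

```python
import re


def lint_script(text: str, max_chars: int = 40) -> list[str]:
    """Return warnings for sentences that are long or contain Latin words."""
    warnings = []
    # Split after Japanese sentence-ending punctuation, keeping the mark.
    sentences = [s for s in re.split(r"(?<=[。！？])", text) if s.strip()]
    for number, sentence in enumerate(sentences, start=1):
        if len(sentence) > max_chars:
            warnings.append(
                f"sentence {number}: {len(sentence)} chars, consider splitting"
            )
        if re.search(r"[A-Za-z]{2,}", sentence):
            warnings.append(
                f"sentence {number}: contains Latin letters, check the reading"
            )
    return warnings


for warning in lint_script("こんにちは。今日はarigatouと言います。"):
    print(warning)
```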

Step 2: Choose the right voice

Most Japanese voice generator platforms offer multiple voices. You will typically see options like these:

- Male or female

- Young or mature

- Formal or casual

- Character-specific (anime style, narrator style, news anchor style)

Pick a voice that fits your content. A product demo needs a calm, professional tone. A game character needs personality. Platforms like Typecast offer a realistic AI voice generator with a library of Japanese voices suited to different use cases, from corporate narration to expressive character work.

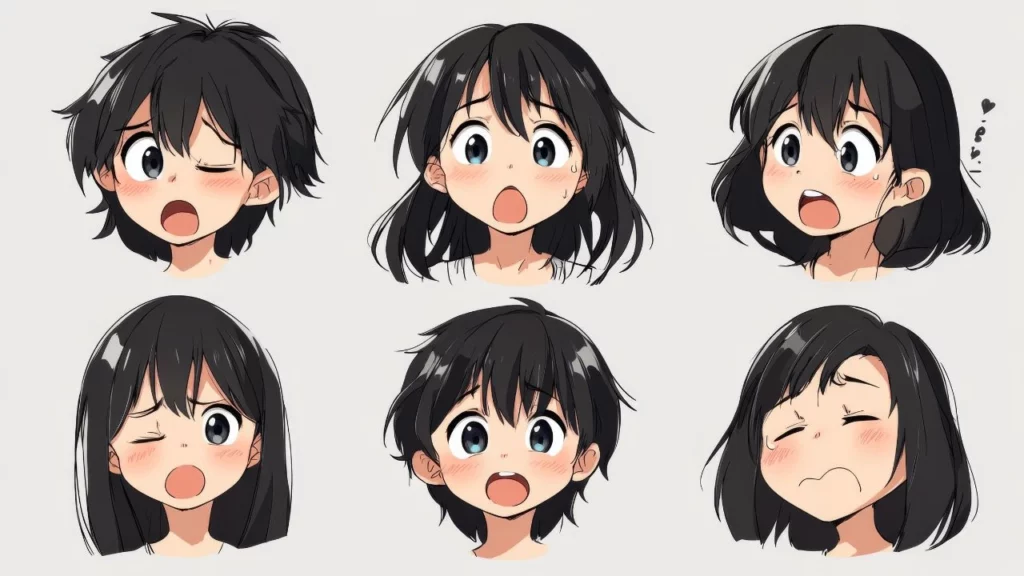

If you are building voices for anime-style content, an anime voice generator can give you purpose-built options with the right tonal characteristics.

Step 3: Generate a first draft

Paste your script into the TTS tool and generate the audio. Listen to the full output without interrupting. Take notes on problem areas:

- Mispronounced words

- Unnatural pauses

- Wrong pitch accent on specific words

- Flat emotional delivery in sections that need emphasis

Step 4: Adjust pronunciation and reading

Most tools let you override how specific words are read. If the engine reads a kanji compound incorrectly, you can input the correct reading in hiragana or katakana.

For example, the kanji 生 can be read as “sei,” “shou,” “nama,” “i(kiru),” “u(mareru),” and several other ways depending on context. If the engine picks the wrong reading, manually correct it.
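If your tool has no built-in override, one workaround is to substitute kana for the problem words before submitting the script. The sketch below assumes a small correction dictionary that you maintain yourself. The entries and the function name are examples only.

```python
def apply_readings(text: str, readings: dict[str, str]) -> str:
    """Replace listed words with their kana readings, longest entries first."""
    # Longest-first so a compound is handled before any shorter entry inside it.
    for word in sorted(readings, key=len, reverse=True):
        text = text.replace(word, readings[word])
    return text


# Hypothetical correction list built up while reviewing drafts.
READINGS = {
    "生ビール": "なまビール",
    "人生": "じんせい",
}

print(apply_readings("人生で初めて生ビールを飲んだ", READINGS))
# じんせいで初めてなまビールを飲んだ
```

Replacing kanji with kana can change how an engine assigns pitch accent, so listen to the result rather than assuming the fix is neutral.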

Step 5: Tune pitch and speed

This is where you shape the voiceover’s feel. Adjust these parameters:

- Speed. Slow down for instructional content. Speed up slightly for energetic ads.

- Pitch. Raise the baseline pitch for a younger-sounding voice. Lower it for authority.

- Pauses. Insert or lengthen pauses between sections. This gives listeners time to process information.

Small adjustments make a big difference. A 5% reduction in speed can turn a rushed-sounding narration into a composed one.
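Many engines expose these controls through SSML, the W3C markup for speech synthesis. The sketch below builds a small SSML string with a 5% slower rate and explicit pauses. Whether your platform accepts SSML, and which attributes it honours, is an assumption you should check in its documentation. The helper name is made up.

```python
from xml.sax.saxutils import escape


def to_ssml(segments: list[tuple[str, int]],
            rate: str = "95%", pitch: str = "+0st") -> str:
    """Wrap (text, pause_ms) pairs in SSML prosody and break elements."""
    parts = []
    for text, pause_ms in segments:
        parts.append(escape(text))  # escape &, <, > in the script text
        if pause_ms > 0:
            parts.append(f'<break time="{pause_ms}ms"/>')
    body = "".join(parts)
    return (
        '<speak version="1.1" xml:lang="ja-JP">'
        f'<prosody rate="{rate}" pitch="{pitch}">{body}</prosody>'
        "</speak>"
    )


print(to_ssml([("こんにちは。", 400), ("それでは始めましょう。", 0)]))
```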

Step 6: Add emotion and emphasis

Advanced platforms allow you to tag portions of text with emotional markers. You might mark a sentence as “happy,” “serious,” “surprised,” or “sad.” The engine modifies its delivery accordingly.

If your tool does not support emotion tags, you can work around it by adjusting punctuation and sentence structure. Exclamation marks and shorter sentences push the AI toward a more energetic delivery. Longer, comma-heavy sentences produce a calmer tone.

Step 7: Export and post-process

Export the audio in the highest quality format available, usually WAV at 44.1 kHz or 48 kHz. You can always compress to MP3 later.

Post-processing in a DAW (digital audio workstation) like Audacity, Adobe Audition, or DaVinci Resolve’s Fairlight can improve the final product:

- Normalize volume levels.

- Apply light EQ to match the audio to your video’s soundtrack.

- Add subtle reverb if the voice needs to sound like it is in a room rather than a recording booth.

- Remove any artifacts or clicks the TTS engine might have introduced.
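To make the first item concrete, peak normalization is just a single gain applied to every sample. A minimal sketch for 16-bit PCM samples held in a Python list follows. The -1 dBFS default is a common but arbitrary choice, and a DAW will do the same job with less effort.

```python
def normalize_peak(samples: list[int], target_dbfs: float = -1.0) -> list[int]:
    """Scale 16-bit PCM samples so the loudest one sits at target_dbfs."""
    peak = max((abs(s) for s in samples), default=0)
    if peak == 0:
        return list(samples)  # silence or empty input: nothing to scale
    target_amplitude = 32767 * 10 ** (target_dbfs / 20)
    gain = target_amplitude / peak
    # Clamp to the 16-bit range in case rounding pushes a sample over.
    return [max(-32768, min(32767, round(s * gain))) for s in samples]


print(normalize_peak([0, 1000, -2000], target_dbfs=-6.0))
```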

Common mistakes to avoid

Creators new to Japanese AI voiceovers tend to make the same errors. Here are the ones to watch for.

Using machine-translated scripts without review

Google Translate and DeepL have improved, but they still produce awkward phrasing in Japanese. A sentence that is grammatically correct can still sound unnatural to a native ear. Always have a fluent speaker check the script before you feed it to the TTS engine.

Ignoring pitch accent entirely

English speakers often do not realize pitch accent exists. They hear the AI output and think it sounds “fine” because they lack the context to notice errors. Native Japanese listeners will catch every mistake. Use a pitch accent dictionary like the NHK日本語発音アクセント辞典 as a reference.

Choosing the wrong voice for the context

A cute, high-pitched anime voice does not work for a bank’s customer service line. A deep, formal narrator voice does not work for a children’s app. Match the voice to the audience and the setting.

Over-processing the audio

Heavy reverb, aggressive compression, and layered effects can make AI speech sound artificial again. Keep post-processing minimal. The goal is to let the natural quality of the voice come through.

Generating one long audio file

Break your script into sections and generate each one separately. This gives you more control over pacing. It also makes fixing a single sentence faster, since you only regenerate the section that contains it rather than the entire file.

Comparing Japanese AI voice platforms

Several platforms offer Japanese voice generation. Each has strengths and trade-offs. Here is what to look for when evaluating them.

Voice quality

Listen for naturalness. Does the voice sound like a real person reading the text? Or does it have a robotic edge? Pay attention to how it handles particles, pitch accent, and sentence-final intonation.

Language support depth

Some platforms treat Japanese as an afterthought. They may have one or two Japanese voices while offering dozens of English ones. Look for platforms with multiple Japanese voice options across genders, ages, and speaking styles.

Customization options

Can you adjust speed, pitch, and emotion? Can you correct readings for specific kanji? Can you add pauses where you need them? The more control you have, the better your output will be.

Output format and quality

Check the sample rate and bit depth of exported files. For professional video work, you need at least 44.1 kHz, 16-bit WAV. Some platforms only offer compressed MP3, which limits your post-production options.
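You can verify an exported file yourself instead of trusting the export dialog. The sketch below reads the header with Python’s standard `wave` module, which handles uncompressed PCM WAV files. The function names are illustrative.

```python
import wave


def wav_format(path) -> dict[str, int]:
    """Read sample rate, bit depth, and channel count from a WAV header."""
    with wave.open(path, "rb") as wav:
        return {
            "sample_rate": wav.getframerate(),
            "bit_depth": wav.getsampwidth() * 8,
            "channels": wav.getnchannels(),
        }


def meets_minimum(fmt: dict[str, int]) -> bool:
    """Check against the 44.1 kHz, 16-bit floor mentioned above."""
    return fmt["sample_rate"] >= 44100 and fmt["bit_depth"] >= 16


# Usage, assuming a file named narration.wav exists:
# print(meets_minimum(wav_format("narration.wav")))
```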

Pricing

Models vary. Some charge per character, some per minute of generated audio, and some offer monthly subscriptions with usage limits. Calculate how much audio you will need per month and compare costs accordingly.
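The comparison is simple arithmetic once you convert everything to the same unit. The sketch below uses invented prices and an assumed narration pace of 300 characters per minute. Replace both with figures from the platforms you are considering and from your own scripts.

```python
CHARS_PER_MINUTE = 300  # assumption: rough narration pace, measure your own


def per_character(minutes: float, price_per_1k_chars: float) -> float:
    """Cost when the platform bills per 1,000 characters."""
    return minutes * CHARS_PER_MINUTE / 1000 * price_per_1k_chars


def per_minute(minutes: float, price_per_minute: float) -> float:
    """Cost when the platform bills per minute of generated audio."""
    return minutes * price_per_minute


def subscription(minutes: float, monthly_fee: float,
                 included_minutes: float, overage_per_minute: float) -> float:
    """Flat fee plus overage beyond the included minutes."""
    extra = max(0.0, minutes - included_minutes)
    return monthly_fee + extra * overage_per_minute


need = 100  # minutes of audio per month
# All prices below are hypothetical placeholders.
print("per character:", per_character(need, price_per_1k_chars=0.20))
print("per minute:   ", per_minute(need, price_per_minute=0.15))
print("subscription: ", subscription(need, 20.0, 60, 0.50))
```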

For a broader look at available options, our overview of the best Japanese voice generator tools breaks down the top five platforms.

Use cases explored

Let’s look at how different creators use Japanese AI voiceovers in practice.

YouTube and social media content

Creators who make Japan travel vlogs, language learning content, or cultural explainers often need Japanese narration. AI voices let them produce bilingual videos without hiring a second narrator.

A creator might record the English narration themselves and use an AI voice for the Japanese sections. The result is a cohesive video that serves both English-speaking and Japanese-speaking audiences.

Anime fan projects and indie games

Independent game developers and fan dubbing groups use AI voices to prototype dialogue. Before investing in professional voice actors, they generate AI voiceovers to test how the script sounds in context.

Some indie games ship with AI voices as the final product. The quality of today’s engines is high enough for many players to accept synthetic speech, especially in visual novels and RPGs, where large volumes of dialogue make full voice acting prohibitively expensive.

If you are working on anime-style character voices specifically, our article on AI voice anime characters covers the tools and techniques that work best.

Corporate training and internal communications

Japanese companies use AI voiceovers for onboarding videos, compliance training, and internal announcements. The advantage is consistency. Every module uses the same voice, tone, and pacing. Updates are instant: change the script, regenerate the audio, and publish.

Audiobooks and podcasts

Japanese audiobook production is growing. AI narration makes it feasible to convert backlist titles into audio format without the cost of studio sessions. The key challenge is maintaining listener engagement over long durations, which requires careful tuning of pacing and emotional variation.

Some podcast creators use AI voices for recurring segments or secondary narrators, blending human and synthetic voices within a single episode.

Accessibility applications

Screen readers for visually impaired users in Japan depend on high-quality TTS. Navigation apps, ATM interfaces, and public transit announcements all use synthesized speech. Naturalness matters here because users interact with these voices constantly.

The role of emotion and expressiveness

Flat speech is the biggest complaint about older TTS systems. Modern engines address this with emotion modeling.

The approach varies by platform. Some use discrete emotion labels (happy, sad, angry, surprised). Others use continuous sliders for arousal (energy level) and valence (positive vs. negative). A few allow you to provide a reference audio clip and ask the model to match its style.

For Japanese specifically, emotional expression is tied to formality. A speaker expressing surprise in casual speech sounds very different from one expressing surprise in formal speech. The best models account for this interaction.

Stanford’s AI Index Report found that speech synthesis quality improved significantly year over year, with tonal and pitch-accent languages like Japanese seeing some of the largest gains.

Working with character voices

Creating distinct character voices is one of the more creative applications of Japanese AI speech. If you are building a game, animation, or interactive story, each character needs a recognizable voice.

Here are factors that differentiate character voices:

- Pitch range. A gruff warrior speaks low. A cheerful schoolgirl speaks high.

- Speed. An anxious character speaks fast. A wise elder speaks slowly.

- Breathiness. Some characters have a breathy, soft delivery. Others are sharp and clipped.

- Speech patterns. Sentence-ending habits, filler words, and dialect markers all shape identity.

Some platforms let you clone or customize voices to create unique characters. Others offer preset character archetypes you can select from a library.

For creators working on anime-style projects who need a female character voice, our guide to anime girl voice text-to-speech walks through the specific options and settings available.

Handling dialects and regional speech

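Standard Japanese (hyōjungo) is based on Tokyo dialect and is what most TTS engines produce. But Japan has dozens of regional dialects, including Kansai-ben, Tohoku-ben, Hakata-ben, and many others, as well as the Ryukyuan languages of Okinawa, which linguists generally classify as separate from Japanese.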

Dialect support in AI voices is still limited. Most engines handle standard Japanese well but struggle with regional speech patterns. If your project requires a Kansai dialect, you may need to:

- Write the script phonetically in how a Kansai speaker would say it.

- Manually adjust pitch patterns to match the dialect’s intonation.

- Accept that some naturalness will be lost compared to a human dialect speaker.

This is an area where the technology still has room to grow. As datasets expand to include more regional speech, dialect support will improve.

Legal and ethical considerations

Using AI voices raises questions about rights and disclosure.

Voice likeness rights

In Japan, the right of publicity (パブリシティ権) protects individuals from unauthorized commercial use of their likeness, including their voice. Using an AI voice that is modeled after a specific person without their consent can create legal risk.

Stick to voices that are either original creations or licensed from consenting voice actors. Most reputable platforms handle this licensing on their end.

Disclosure

Some jurisdictions and platforms require you to disclose when audio is AI-generated. YouTube, for example, introduced labels for synthetic content in 2024. Check the rules for your distribution platform and comply with them.

Copyright on AI-generated audio

The copyright status of AI-generated content varies by country. In Japan, the Agency for Cultural Affairs has issued guidance suggesting that AI-generated works may qualify for copyright protection if a human exercised creative control over the output. Consult a legal professional if your project has commercial stakes.

Optimizing voiceovers for different media

The same script may need different audio treatments depending on where it will be used.

Video

- Match the voiceover speed to the visual pacing. Fast cuts need shorter sentences.

- Leave room for sound effects and music. Do not fill every second with narration.

- Export at the same sample rate as your video project (usually 48 kHz for video; 44.1 kHz is common for audio-only releases).

Podcasts and audiobooks

- Use a consistent voice throughout. Switching voices between episodes can confuse listeners.

- Add slightly longer pauses between paragraphs to give the ear a break.

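- Normalize loudness to around -16 LUFS for podcasts. For audiobooks, follow your distributor’s spec: ACX, for example, asks for RMS levels between -23 dB and -18 dB with peaks no higher than -3 dB.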
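Proper LUFS measurement involves frequency weighting and gating, so it is best left to a loudness meter in your DAW. ACX states its audiobook requirements as RMS levels, and unweighted RMS is easy to compute for a rough sanity check. The sketch below works on 16-bit PCM samples. It is not a LUFS measurement.

```python
import math


def rms_dbfs(samples: list[int]) -> float:
    """Unweighted RMS level of 16-bit PCM samples, in dB relative to full scale."""
    if not samples:
        raise ValueError("no samples")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0:
        return float("-inf")  # digital silence
    return 20 * math.log10(math.sqrt(mean_square) / 32768)


# A square wave at half of full scale sits about 6 dB below full scale.
print(round(rms_dbfs([16384, -16384] * 100), 2))  # -6.02
```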

Apps and interfaces

- Keep utterances short. No one wants a 30-second AI monologue from a navigation app.

- Pre-generate common phrases for faster playback. Real-time synthesis adds latency.

- Test on the actual device. Phone speakers and car speakers reproduce voice differently than studio monitors.
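Pre-generating phrases usually comes down to a cache keyed on the text and the voice. The sketch below shows one way to do it. The `synthesize` callable stands in for whatever TTS call your platform provides and is purely hypothetical, as are the other names.

```python
import hashlib
from pathlib import Path
from typing import Callable


def cached_audio(text: str, voice: str, cache_dir: Path,
                 synthesize: Callable[[str, str], bytes]) -> Path:
    """Return a cached audio file for (voice, text), synthesizing only on a miss."""
    key = hashlib.sha256(f"{voice}\n{text}".encode("utf-8")).hexdigest()[:16]
    path = cache_dir / f"{key}.wav"
    if not path.exists():
        cache_dir.mkdir(parents=True, exist_ok=True)
        path.write_bytes(synthesize(text, voice))
    return path


def fake_synthesize(text: str, voice: str) -> bytes:
    """Placeholder for a real TTS request; returns dummy bytes."""
    return f"{voice}:{text}".encode("utf-8")


# Usage: the second call for the same phrase reads from disk instead.
# path = cached_audio("次は東京です", "narrator", Path("tts_cache"), fake_synthesize)
```

Include every setting that affects the audio (speed, pitch, emotion) in the key, or a changed setting will silently return stale files.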

Games

- Generate multiple takes of the same line with different emotional intensities. This gives you options during dialogue scripting.

- Consider how the voice will sound with game audio playing simultaneously. A voice that sounds clear in isolation might get lost under a loud soundtrack.

Future trends in Japanese AI voice technology

The field is moving quickly. Here is what to expect in the next two to three years.

Real-time voice synthesis

Latency is dropping. Current engines can generate speech in near real-time, making live applications like VTuber streaming and interactive customer service viable. By 2026, expect sub-200ms latency for most commercial platforms.

Multilingual code-switching

Japanese speakers frequently mix in English words and phrases. Future models will handle this code-switching seamlessly, pronouncing English loanwords with appropriate Japanese phonology or switching to English pronunciation when the context calls for it.

Personalized voice creation

Instead of choosing from a preset library, users will be able to create custom voices by providing a few minutes of reference audio. This is already possible in some English-language tools and will expand to Japanese.

Better dialect coverage

As mentioned earlier, dialect support is limited today. Expect dedicated Kansai, Kyushu, and Hokkaido voice options to appear as training data becomes more diverse.

Integration with video and animation tools

Voice generation will be embedded directly into video editing and animation software. Instead of exporting audio from one tool and importing it into another, creators will generate and adjust voiceovers inside their existing workflow.

Practical tips for getting the best results

To wrap up the how-to portion, here is a quick reference list.

- Write scripts specifically for spoken delivery. Read them aloud before generating.

- Generate short segments, not the entire script at once.

- Compare at least three different voices before committing to one.

- Listen to your output on multiple devices: headphones, phone speaker, laptop speaker.

- Ask a native Japanese speaker to review the final audio for naturalness.

- Keep a log of pronunciation corrections so you do not have to fix the same words in future projects.

- Save your project settings (voice, speed, pitch) so you can maintain consistency across multiple videos or episodes.

- Update your workflow as platforms release new voices and features. What sounded state-of-the-art six months ago may already be outdated.
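Saved settings do not need special tooling. A small JSON file per voice is enough if your platform has no preset feature. The field names below are illustrative and will not match any specific tool’s parameters.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class VoicePreset:
    """Hypothetical settings you want to reuse across episodes."""
    voice: str
    speed: float = 1.0
    pitch_semitones: float = 0.0


def dumps_preset(preset: VoicePreset) -> str:
    """Serialize a preset to JSON, keeping Japanese text readable."""
    return json.dumps(asdict(preset), ensure_ascii=False, indent=2)


def loads_preset(text: str) -> VoicePreset:
    """Rebuild a preset from its JSON form."""
    return VoicePreset(**json.loads(text))


preset = VoicePreset(voice="ナレーター女性1", speed=0.95, pitch_semitones=-1.0)
print(dumps_preset(preset))
```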

The tools are accessible, the quality is high, and the learning curve is short. The main investment is time spent learning what sounds right in Japanese, which comes from listening, iterating, and paying attention to feedback from native speakers.