Getting Japanese TTS right is harder than most people think. Unlike English, which marks words with stress, Japanese uses pitch accent to distinguish meaning, so a flat, robotic voice immediately sounds wrong to anyone familiar with the language.

Whether you’re a streamer adding Japanese narration to your content, a gamer building mods, or an anime fan dubbing clips, understanding how Japanese text-to-speech works will save you hours of frustration and help you produce results that actually sound convincing.

Why pitch accent matters in Japanese

Japanese is a pitch-accent language. The meaning of a word can change entirely based on which syllables are high or low in pitch. The word “hashi” means “bridge” with one pitch pattern and “chopsticks” with another.

Most early TTS engines ignored this completely. They produced flat output that native speakers found jarring or even incomprehensible in certain contexts.

- Ame (rain) vs. ame (candy): distinguished only by pitch

- Kaki (persimmon) vs. kaki (oyster): same kana spelling, different kanji (柿 vs. 牡蠣) and different accent

- Nihon itself has two accepted pitch patterns depending on region
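The pairs above differ in where the pitch falls. Accent dictionaries for standard (Tokyo) Japanese record this as a single number: the mora after which pitch drops, with 0 meaning it never drops. The sketch below turns that number into a high/low (H/L) pattern for a word followed by one particle such as ga. It is a simplified model that ignores phrase-level effects such as downstep, the helper `pitch_pattern` is hypothetical, and the accent numbers in the example are the commonly listed Tokyo values (hashi: 1 for chopsticks, 2 for bridge; ame: 1 for rain, 0 for candy).

```python
def pitch_pattern(morae: list[str], accent: int) -> str:
    """Return an H/L pitch pattern for a word plus one following particle.

    Simplified Tokyo-Japanese model. `accent` is the accent-dictionary number:
    the mora after which pitch falls (0 = heiban, pitch never falls).
    """
    n = len(morae)
    if not 0 <= accent <= n:
        raise ValueError("accent must be between 0 and the number of morae")
    pattern = []
    # Positions 1..n are the word's morae; position n + 1 is the particle.
    for pos in range(1, n + 2):
        if accent == 1:
            high = pos == 1            # atamadaka: high first mora, low afterwards
        elif accent == 0:
            high = pos >= 2            # heiban: low start, stays high through the particle
        else:
            high = 2 <= pos <= accent  # low start, high up to the accented mora, then low
        pattern.append("H" if high else "L")
    return "".join(pattern)


# Each word is followed by the particle "ga".
print(pitch_pattern(["ha", "shi"], 1))  # chopsticks: HLL
print(pitch_pattern(["ha", "shi"], 2))  # bridge:     LHL
print(pitch_pattern(["a", "me"], 1))    # rain:       HLL
print(pitch_pattern(["a", "me"], 0))    # candy:      LHH
```

Note that bridge and chopsticks only differ audibly when you hear where the fall lands, which is exactly the information a flat-pitch engine throws away.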

According to a report from the National Institute for Japanese Language and Linguistics, pitch accent errors remain “the single most identifiable marker of non-native or synthetic speech in Japanese.”

How modern AI handles Japanese speech synthesis

Recent advances in neural TTS have changed the picture considerably. Models trained on large Japanese speech corpora now handle pitch accent far more accurately than the concatenative systems that were common as recently as five years ago.

Google Cloud’s documentation for its Text-to-Speech API notes that the WaveNet and Neural2 voices for Japanese use “prosodic modeling that accounts for pitch accent patterns at both the word and phrase level.”
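As a rough sketch of what requesting one of these voices looks like, the code below uses the `google-cloud-texttospeech` Python client. It assumes the package is installed, Google Cloud credentials are configured, and Neural2 or WaveNet Japanese voices are available to your project. The helpers `pick_neural2_voice` and `synthesize_japanese` are hypothetical, and the client import is deferred so the voice-selection logic can be read and tested without the library.

```python
from typing import Optional


def pick_neural2_voice(voice_names: list[str]) -> Optional[str]:
    """Return the first Japanese Neural2 voice name, falling back to WaveNet (hypothetical helper)."""
    for family in ("Neural2", "Wavenet"):
        for name in sorted(voice_names):
            if name.startswith("ja-JP-") and f"-{family}-" in name:
                return name
    return None


def synthesize_japanese(text: str, out_path: str = "output.wav") -> None:
    """Synthesize Japanese speech with Google Cloud Text-to-Speech and save it to `out_path`."""
    from google.cloud import texttospeech  # pip install google-cloud-texttospeech

    client = texttospeech.TextToSpeechClient()
    names = [v.name for v in client.list_voices(language_code="ja-JP").voices]
    voice_name = pick_neural2_voice(names)
    if voice_name is None:
        raise RuntimeError("no Neural2 or WaveNet Japanese voice available")

    response = client.synthesize_speech(
        input=texttospeech.SynthesisInput(text=text),
        voice=texttospeech.VoiceSelectionParams(language_code="ja-JP", name=voice_name),
        audio_config=texttospeech.AudioConfig(
            audio_encoding=texttospeech.AudioEncoding.LINEAR16,  # 16-bit PCM, returned with a WAV header
            sample_rate_hertz=24000,
        ),
    )
    with open(out_path, "wb") as f:
        f.write(response.audio_content)
```

Calling `synthesize_japanese("橋を渡ります")` would then write a 24 kHz WAV file you can audition for pitch-accent errors. Listing voices at runtime, rather than hard-coding a name, avoids breaking when the available voice list changes.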

The role of SSML and phonetic markup

Speech Synthesis Markup Language (SSML) lets you manually adjust pitch, rate, and emphasis. For Japanese, this means you can correct accent patterns that the engine gets wrong.

Here’s what you can control with SSML:

- Pitch contour on specific morae

- Pause duration between phrases

- Speaking rate for dramatic or casual delivery

- Emphasis patterns for emotional content
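Here is a minimal sketch of how those controls map onto markup, assuming generic W3C SSML 1.1 elements (`<prosody>`, `<break>`, `<emphasis>`). Engines differ in which attributes they honor for Japanese, so treat it as a starting point. The `build_ssml` helper and its `(text, pitch, emphasized)` tuple format are hypothetical.

```python
from xml.sax.saxutils import escape

SSML_NS = "http://www.w3.org/2001/10/synthesis"


def build_ssml(phrases, rate="medium", pause_ms=400):
    """Build an SSML document from (text, pitch, emphasized) tuples.

    `pitch` is any SSML pitch value, e.g. "+10%", "low", or "default".
    """
    parts = [
        f'<speak version="1.1" xmlns="{SSML_NS}" xml:lang="ja-JP">',
        f'<prosody rate="{rate}">',  # speaking rate for the whole passage
    ]
    for i, (text, pitch, emphasized) in enumerate(phrases):
        body = escape(text)  # escape &, <, > so the document stays well-formed
        if emphasized:
            body = f'<emphasis level="strong">{body}</emphasis>'
        parts.append(f'<prosody pitch="{pitch}">{body}</prosody>')
        if i < len(phrases) - 1:
            parts.append(f'<break time="{pause_ms}ms"/>')  # pause between phrases
    parts.append("</prosody></speak>")
    return "".join(parts)


print(build_ssml([("箸を取って", "+10%", True), ("ください", "default", False)], rate="slow"))
```

Building the markup in one function keeps pauses and escaping consistent; if your engine rejects an attribute, you only have to change it in one place.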

Not every platform supports full SSML for Japanese, though. Check your tool’s documentation before relying on it.

Training data quality separates good from bad

The difference between a realistic Japanese TTS voice and a robotic one comes down largely to training data. Models trained on read-aloud news clips sound stiff.

Models trained on conversational speech, voice acting, and varied emotional registers sound natural. An analysis from Speechmatics found that “TTS systems trained on emotionally diverse Japanese datasets scored 23% higher in listener naturalness ratings compared to those using broadcast-style corpora alone.”
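Naturalness ratings like these are usually collected as mean opinion scores (MOS): listeners rate each clip on a 1-to-5 scale and the ratings are averaged. One plausible reading of a figure such as "23% higher" is the relative difference between two such means. The sketch below computes that with Python's `statistics` module; the ratings are made up for illustration and are not from the Speechmatics analysis, and the helper name `relative_gain` is hypothetical.

```python
from statistics import mean


def relative_gain(baseline: list[int], candidate: list[int]) -> float:
    """Percentage by which the candidate's mean opinion score exceeds the baseline's."""
    for rating in baseline + candidate:
        if not 1 <= rating <= 5:
            raise ValueError("ratings must be on the 1-5 MOS scale")
    return (mean(candidate) - mean(baseline)) / mean(baseline) * 100


# Hypothetical listener ratings, not real study data.
broadcast_only = [3, 3, 4, 3, 3]        # MOS 3.2
emotionally_diverse = [4, 4, 4, 4, 4]   # MOS 4.0
print(f"{relative_gain(broadcast_only, emotionally_diverse):.0f}% higher")  # 25% higher
```

A relative gain depends on the baseline: the same 0.8-point difference is a larger percentage over a low-scoring system than over a high-scoring one.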

Practical applications for creators and fans

So which Japanese TTS tools produce pitch and accent close to a native speaker’s? Here’s what you can explore and try for yourself.

Anime content and fan projects

Anime fans use Japanese AI voice technology to create fan dubs, parody content, and character voice generators. Getting the right vocal quality matters here because the audience knows exactly what authentic anime voice acting sounds like.

Tools like Typecast’s realistic AI voice generator offer character-style voices that handle the expressive range anime content demands, without requiring voice acting experience from the creator. If you’re specifically looking for character voices, an anime voice generator can streamline the process considerably.

Game modding and indie development

Indie game developers working on JRPGs or visual novels often lack the budget for Japanese voice actors. A Japanese voice generator fills that gap when the alternative is no voice acting at all.

Key considerations for game audio:

- Consistent character voice across hundreds of lines

- Emotional variation for different scenes

- Proper honorific pronunciation (san, sama, kun, chan)

- Natural sentence-final particles (ne, yo, wa, ze)
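One way to keep a character’s voice consistent across hundreds of lines is to define each voice once and derive every line’s synthesis settings from it, varying only the per-scene emotion. The sketch below assumes a hypothetical engine that accepts a voice ID, pitch, rate, and emotion label; the `VoiceProfile` type, the `CAST` table, the voice IDs, and the emotion labels are illustrative and do not correspond to any particular tool’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceProfile:
    """Fixed settings for one character (hypothetical engine parameters)."""
    voice_id: str
    pitch: str = "default"
    rate: str = "medium"


# Define each character once; frozen=True prevents accidental per-line edits.
CAST = {
    "Aoi": VoiceProfile("voice-female-01", pitch="+5%"),
    "Kenta": VoiceProfile("voice-male-02", rate="fast"),
}


def build_jobs(script: list[tuple[str, str, str]]) -> list[dict]:
    """Turn (speaker, line, emotion) rows into synthesis jobs sharing each speaker's profile."""
    jobs = []
    for index, (speaker, line, emotion) in enumerate(script):
        profile = CAST[speaker]  # a KeyError flags a speaker with no defined voice
        jobs.append({
            "id": f"{speaker}_{index:04d}",
            "text": line,
            "voice_id": profile.voice_id,
            "pitch": profile.pitch,
            "rate": profile.rate,
            "emotion": emotion,  # the only setting that varies per line
        })
    return jobs


script = [
    ("Aoi", "ケンタくん、待ってよ！", "excited"),
    ("Kenta", "先生、ありがとうございます。", "calm"),
]
for job in build_jobs(script):
    print(job["id"], job["voice_id"], job["emotion"], job["text"])
```

Keeping the script as plain rows also makes it easy to proofread honorifics and sentence-final particles before any audio is generated.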

Streaming and video content

Streamers who react to Japanese media or create bilingual content use TTS for real-time translation overlays, narration, and comedic bits. Flat-sounding Japanese TTS kills the bit. Natural-sounding output keeps the audience engaged.

What to look for in a Japanese TTS tool

Not all engines are equal. Here’s a quick checklist:

- Pitch accent accuracy: Test with homophones like hashi, kaki, and ame

- Emotional range: Can the voice sound angry, sad, or excited?

- SSML support: Can you fine-tune pronunciation manually?

- Voice variety: Male, female, child, elderly options

- Output quality: A sample rate of at least 24 kHz for clean audio
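The sample-rate item in the checklist is easy to verify on exported files. The sketch below uses Python’s standard `wave` module, so it only handles uncompressed WAV output; the 24 kHz threshold is the checklist’s recommendation, and the helpers `meets_sample_rate` and `write_silence` are hypothetical.

```python
import os
import tempfile
import wave


def meets_sample_rate(path: str, minimum_hz: int = 24000) -> bool:
    """Return True if the WAV file at `path` is sampled at `minimum_hz` or higher."""
    with wave.open(path, "rb") as wav:
        return wav.getframerate() >= minimum_hz


def write_silence(path: str, rate_hz: int, seconds: float = 0.1) -> None:
    """Write a short mono 16-bit silent WAV file, used here only to demonstrate the check."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes = 16-bit samples
        wav.setframerate(rate_hz)
        wav.writeframes(b"\x00\x00" * int(rate_hz * seconds))


with tempfile.TemporaryDirectory() as tmp:
    low = os.path.join(tmp, "low.wav")
    ok = os.path.join(tmp, "ok.wav")
    write_silence(low, 16000)
    write_silence(ok, 24000)
    print(meets_sample_rate(low), meets_sample_rate(ok))  # False True
```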

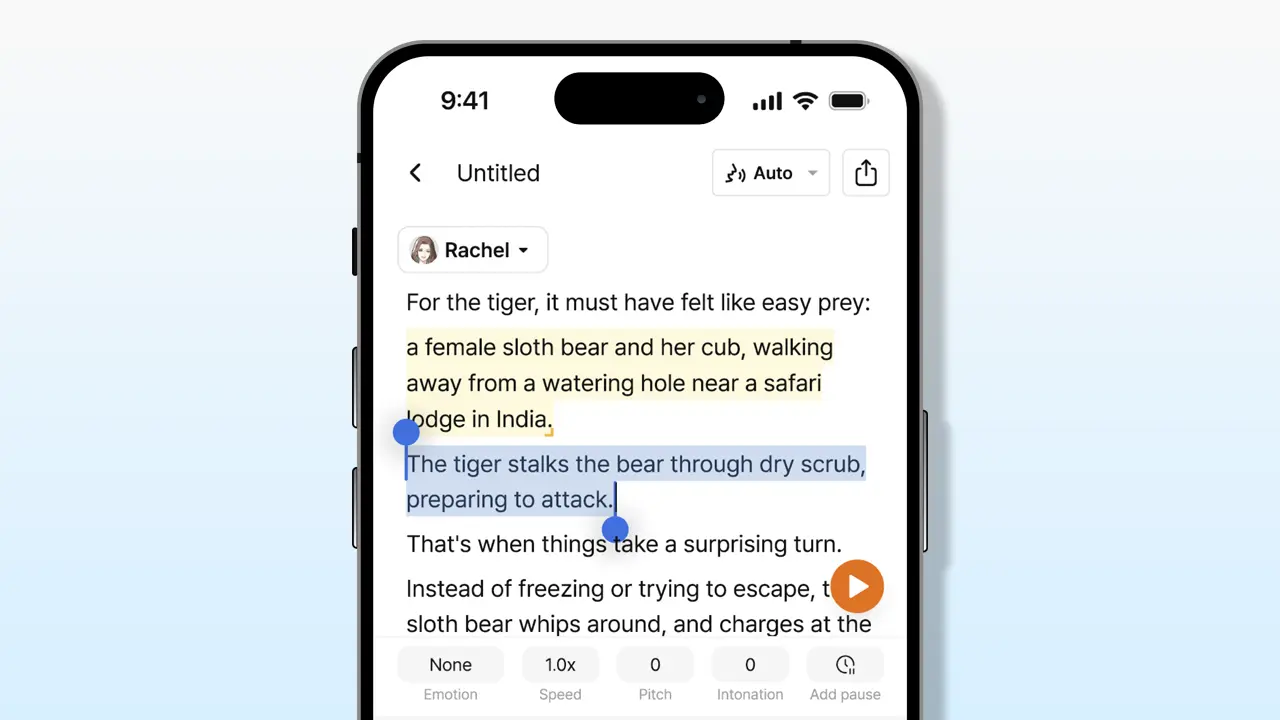

Smarter Japanese TTS tools can now pick up on the context of your script and recommend which emotion fits best. For instance, Typecast’s smart emotion feature lets you click a single button to have the AI read the script and choose the most appropriate emotion.

The road ahead for Japanese speech synthesis

Expect real-time voice cloning and emotion-adaptive TTS to become standard within two years. The gap between synthetic and human Japanese speech is closing fast, and for most content creation purposes, it’s already narrow enough to be useful.

The creators who learn to work with these tools now will have a clear advantage when the technology matures further.