Cloning someone’s voice and face, also known as deepfake voice and video, is a controversial technology.

We live in an age where we can no longer be sure whether what we see is real. Imagine someone tampering with camera footage after a crime. Would the police still be able to tell whether it was a smoking gun?

This is how it works

A deepfake voice is created by copying the voice of a specific person. A computer program learns that person’s voice from recordings and then produces a synthetic copy of it. Naturally, the better the data the program is trained on, the more closely the result resembles the original.
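To make the point about data quality concrete, here is a toy Python sketch. It is not a real voice-cloning system: the "voice profile" is just a hypothetical list of numbers, each "recording" is that profile plus random noise, and "training" is a simple average. All names, such as `learn_voice_profile`, are invented for illustration.

```python
import math
import random


def cosine_similarity(a, b):
    """Return how closely two vectors point in the same direction (1.0 = identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def learn_voice_profile(true_profile, n_recordings, noise, rng):
    """Toy stand-in for training: average several noisy 'recordings' of a voice.

    Assumption: each recording is the true profile plus Gaussian noise.
    """
    totals = [0.0] * len(true_profile)
    for _ in range(n_recordings):
        for i, value in enumerate(true_profile):
            totals[i] += value + rng.gauss(0.0, noise)
    return [t / n_recordings for t in totals]


rng = random.Random(0)  # fixed seed so the run is repeatable
true_profile = [rng.uniform(-1.0, 1.0) for _ in range(16)]

# Same number of recordings, different recording quality.
noisy_copy = learn_voice_profile(true_profile, n_recordings=20, noise=1.0, rng=rng)
clean_copy = learn_voice_profile(true_profile, n_recordings=20, noise=0.1, rng=rng)

sim_noisy = cosine_similarity(true_profile, noisy_copy)
sim_clean = cosine_similarity(true_profile, clean_copy)

print(f"similarity from noisy recordings: {sim_noisy:.3f}")
print(f"similarity from clean recordings: {sim_clean:.3f}")
```

In this toy setup the copy learned from cleaner recordings ends up closer to the original profile. Real systems use deep neural networks rather than an average, but the same intuition applies.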

The same is true for deepfake videos. As long as photos and videos of a person exist, their face can be replaced with any face we want. The word “deepfake” combines “deep learning” and “fake”: the faces are generated by a computer model. For example, deepfake videos of Obama once became well known. Unfortunately, in many cases the technology has been used to do harm rather than good.

This is what Neosapience worked on a few years ago. Even though the collected data was not clean, studio-recorded audio, they were able to achieve this result.

Not only did they create the voice of Donald Trump, but they also made him speak Korean using a technology called Cross Lingual Voice Cloning, which lets a person speak a language they do not actually speak, in their own voice and tone.

Deepfake maker online

Many AI companies demonstrate their technology by making deepfake videos and voices of celebrities, such as celebrity text-to-speech or video, and some of these have gone viral. It is quite easy to find deepfake makers online, and you can easily create deepfake videos by uploading your own videos.

The more closely a voice resembles the actual celebrity, the more content people create with it.

Text-to-speech and text-to-speech singing are among the most entertaining features you can try online.

When used for fun, it is quite entertaining. Unfortunately, everything has two sides, and deepfakes have been involved in many crimes.

Now, the concerns

Although many algorithms have been developed to detect deepfakes, and detection is not difficult, deepfakes are still used for impersonation, financial crime, and other types of crime.

On top of that, as deepfake software becomes easier to access, more people, not just celebrities, become targets of crime.

How a new technology is used is entirely up to the people who use it. What matters, however, is that whatever the purpose, it should not harm anyone.

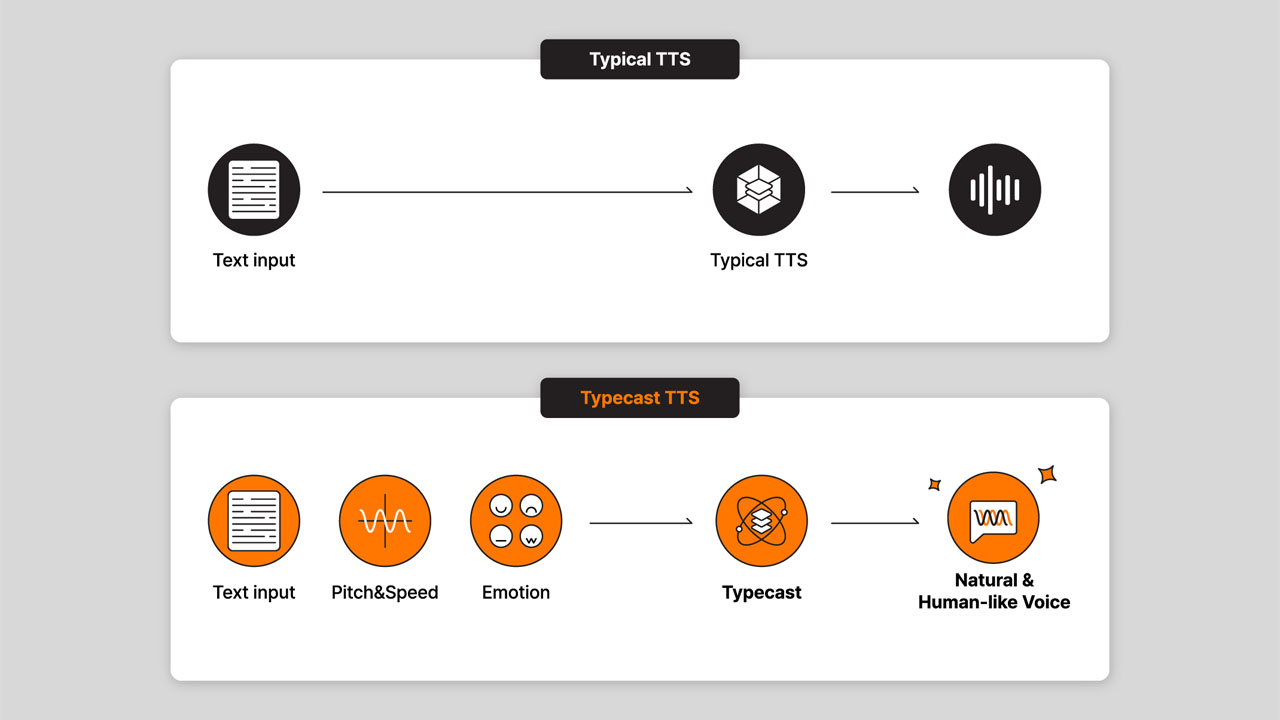

Typecast also offers a video feature that allows anyone to cast virtual actors using its AI voice synthesizer along with its AI video generator. These virtual actors are created with real human actors, who are compensated with royalties. We always make sure that content created with these actors does not violate any legally binding norms, such as laws, statutes, rules, and regulations.